Calcifying Trust, Part I

Last month I updated my website to be readable without Javascript. I alluded to some of the reasoning for that in the blog post where I described how I modified my website. After chatting with a colleague, I realized I could be less oblique about why I modified my website. This is that belated blog post.

tl;dr: Javascript enables affordances like arbitrary interactivity, which can enhance the user experience of the web. However, these same features increase the attack surface and can be used to violate user privacy. I end with a discussion of the tradeoffs of using Javascript to deter LLM training.

What is a browser?

While this post is about why I made enabling Javascript optional for folks reading my website, the real point of contention is what a browser is: what it ought to do, and what it ought not to do.

Modern browsers are incredibly complex and basically run their own operating system. They bundle together several pieces of software that perform different functions, not all of which may be useful to most users.[1] Ultimately though, the basic function of a browser is as a UI for web pages.

Interacting with web pages

Most users have Javascript on by default. However, Javascript is not necessary for accessing web pages in the first place![2] So what is Javascript and why is it in our browsers? Javascript is a "Turing complete" programming language. By "Turing complete" we just mean that it can execute arbitrary computable functions: anything you can write in any other programming language, you can write in Javascript. All modern browsers are bundled with a Javascript runtime, which reads and executes Javascript that's either (1) embedded in the HTML in script tags or (2) hosted elsewhere, but linked to from the HTML.

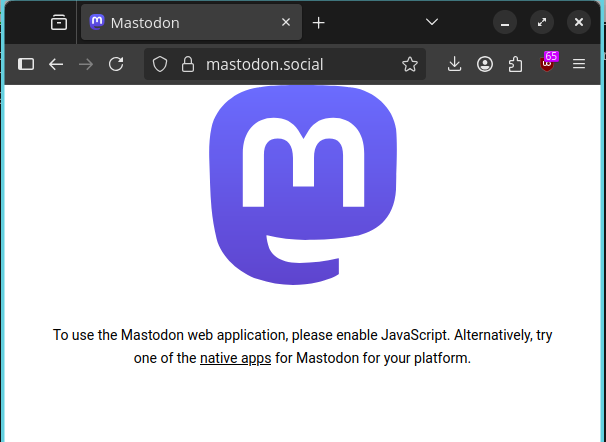
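To make those two cases concrete, here is a minimal sketch in Python (chosen so it runs outside a browser) that lists the scripts a page would ask a browser to run. The class name `ScriptFinder` and the sample page are made up for illustration; a real browser's handling of script tags is considerably more involved.

```python
from html.parser import HTMLParser


class ScriptFinder(HTMLParser):
    """Separates linked scripts (src=...) from scripts embedded in the page."""

    def __init__(self):
        super().__init__()
        self.external = []  # URLs of scripts hosted elsewhere
        self.inline = []    # source text of scripts embedded in the HTML
        self._in_inline = False

    def handle_starttag(self, tag, attrs):
        if tag != "script":
            return
        src = dict(attrs).get("src")
        if src is not None:
            self.external.append(src)
        else:
            # No src attribute: the code lives between the tags.
            self._in_inline = True
            self.inline.append("")

    def handle_data(self, data):
        if self._in_inline:
            self.inline[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_inline = False


# Hypothetical page with one linked and one embedded script.
SAMPLE_PAGE = (
    '<html><head><script src="https://cdn.example/lib.js"></script>'
    '<script>console.log("hi");</script></head>'
    "<body><p>text</p></body></html>"
)

finder = ScriptFinder()
finder.feed(SAMPLE_PAGE)
finder.close()
```

After feeding the sample page, `finder.external` holds the linked script's URL and `finder.inline` holds the embedded source. Nothing here executes the Javascript; it only shows where a runtime would find it.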

You do not need Javascript to browse the web. Materially, your web experience may be worse for it, especially if the site was designed to make heavy use of Javascript for relevant content. For example, this is what it looks like if you try to access mastodon.social with Javascript disabled:

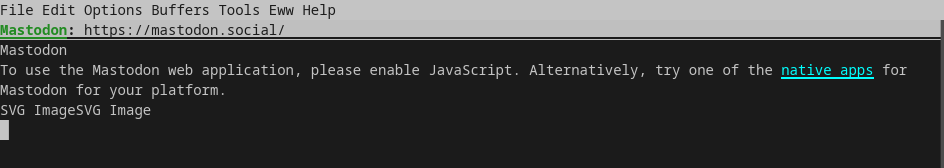

In fact, there are text-only browsers that cannot run Javascript, since they have no Javascript engine attached; for example, Emacs's built-in lightweight web browser, eww:[3]

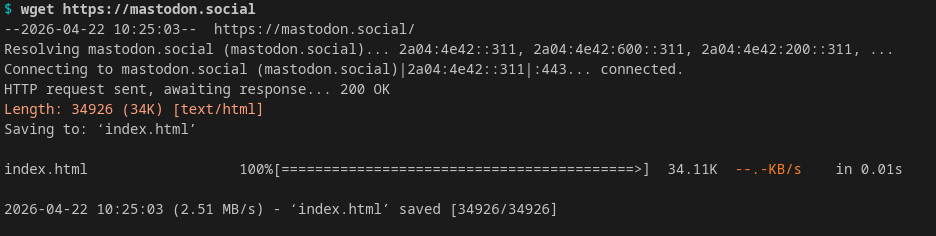

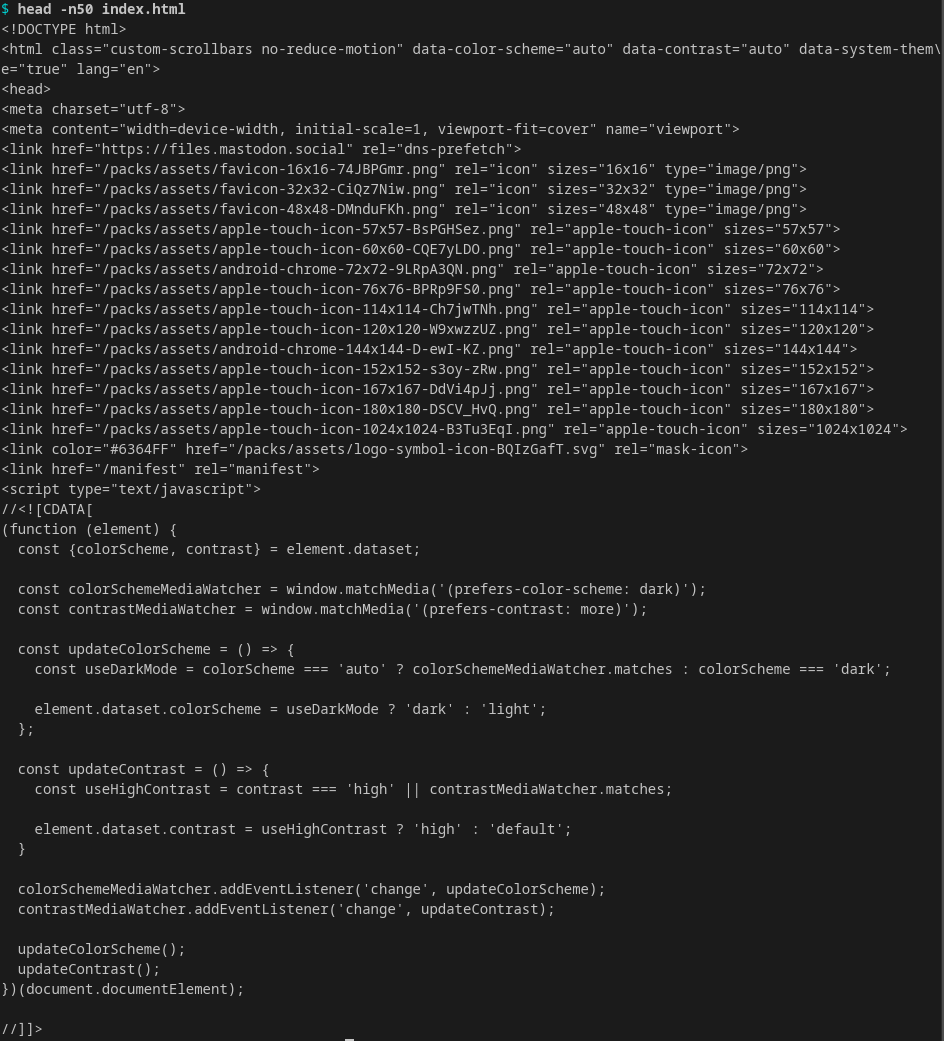
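As a rough illustration of what "no Javascript engine attached" means in practice, the sketch below pulls the readable text out of a page and simply never looks inside script or style tags. It is a toy under stated assumptions, not how eww works: `TextOnlyRenderer` and `render_text` are hypothetical names, and real text browsers also handle layout, links, forms, and much more.

```python
from html.parser import HTMLParser


class TextOnlyRenderer(HTMLParser):
    """Collects the text a reader would see, ignoring scripts and styles."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # > 0 while inside a skipped element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def render_text(html: str) -> str:
    """Return the page's text content, one chunk per line."""
    renderer = TextOnlyRenderer()
    renderer.feed(html)
    renderer.close()
    return "\n".join(renderer.chunks)
```

A page whose content is written directly in the HTML comes through intact. A page that builds its content with Javascript comes through empty, which is roughly the failure mode in the Mastodon screenshot above.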

Finally, as stated above, browsers are UIs for viewing web pages. As UIs, their primary purpose is human convenience. Critically, you don't even need a browser to access the web: you can grab an arbitrary webpage through command-line utilities like wget.
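The same idea can be sketched in a few lines of Python using only the standard library. This is not wget itself, just an approximation of the core step of asking for a URL and getting the document back; the function name `fetch_page` and the User-Agent string are made up for illustration.

```python
from urllib.request import Request, urlopen


def fetch_page(url: str, timeout: float = 10.0) -> str:
    """Download a page and return its body decoded as text."""
    # Hypothetical User-Agent; some servers reject requests that send none.
    request = Request(url, headers={"User-Agent": "plain-fetch-sketch/0.1"})
    with urlopen(request, timeout=timeout) as response:
        # Fall back to UTF-8 when the response does not declare a charset.
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

Calling `fetch_page` on a URL returns the raw HTML as a string. No rendering happens and no Javascript runs, because nothing in this program knows how to do either.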

Javascript is feature creep

I bring all of this up to highlight the fact that only HTML is strictly necessary for interpreting web pages.[4] While we tend to think of HTML as primarily being about layout of the page (i.e., the Document Object Model, or DOM), HTML's critical functional capacity is in the parts the end-user doesn't see. HTML contains critical information for how to interpret streams of data (e.g., encoding information in the head), how to link data (e.g., the link tags in the head), and how to handle certain user interactions (e.g., opening a new page or scrolling to a particular section when a user clicks on a link).

Wherefore Javascript? or Worse is Better (again)

Classically, HTML did not provide much in the way of support for interactivity. While this is related to the fact that HTML is not Turing complete, it is not an equivalent concept: you can have some kind of bounded interactivity without needing to be able to execute arbitrary code. HTML was originally designed to be static, providing the bare bones necessary to navigate the web. Over the years, end-users have desired dynamic features for websites, from the blink tag to the modern web. Along the way, there were several candidate "browser-based" languages that offered solutions; Javascript is the one that won out.[5]

Interactivity considered desirable

So why would website designers desire interactivity or dynamism? On the innocuous side of things: dynamic, interactive, or responsive elements of a webpage can lead to a better user experience. This includes features like interactive charts and collapsible elements of a page. Critically, the dynamic angle is nearly synonymous with executing user-responsive code client-side (i.e., locally on your machine), as opposed to server-side (i.e., on the machine hosting the web page). The article I linked to in Footnote 1 again explains this nicely, for those who are unfamiliar with the distinction.

HTML has definitionally always rendered client-side. Certainly some of the dynamic and interactive functionality that Javascript enables can be implemented server-side. There are two main reasons not to implement these features server-side: (1) if the server is "far away" from the client, the user will notice latency in their interactions, which degrades the user experience, and (2) each server has finite bandwidth for requests, so websites that push this functionality client-side are able to handle more unique clients.[6]

So overall, client-side dynamism is the design we have landed on.

Enriching uranium

Now on to the more nefarious side of things. The more features of the host environment (i.e., the browser, your operating system) a Turing complete language's runtime can access, the more control and surveillance you can build into your website's functionality.

Problem: "good" feature access can be used for ill. Let's consider access to two elements of the execution environment:

- Cookies: small bits of text data that your browser stores locally on your computer, and

- The hover event: a link between user input (cursor movement) and the DOM.

Suppose you're shopping on an e-commerce website that has a bunch of search parameters. Without cookies, you will need to re-enter your search parameters every time you hit the back button. Attaching a tooltip to the hover event allows the dynamic display of information without polluting the back button. These are both desirable features.

Unfortunately, we could also use cookies to display different information, depending on user features. We could track user cursor interactions and execute code locally that updates the price dynamically. Not great!
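Here is a minimal sketch of that dual use, written in Python rather than Javascript and not tied to any real site. Everything in it is hypothetical: the cookie names `query` and `visits`, the base price, and the markup rule are invented for illustration. The point is only that the same stored state serves both purposes.

```python
from http.cookies import SimpleCookie

BASE_PRICE_CENTS = 10000  # hypothetical list price: 100.00


def parse_cookies(cookie_header: str) -> dict:
    """Turn a Cookie header string into a plain name -> value dict."""
    cookies = SimpleCookie()
    cookies.load(cookie_header)
    return {name: morsel.value for name, morsel in cookies.items()}


def restored_search(cookie_header: str) -> str:
    """The 'good' use: bring back the search the user already typed."""
    return parse_cookies(cookie_header).get("query", "")


def quoted_price_cents(cookie_header: str) -> int:
    """The 'ill' use: quote a price based on what the cookies reveal."""
    raw_visits = parse_cookies(cookie_header).get("visits", "0")
    visits = int(raw_visits) if raw_visits.isdigit() else 0
    # Hypothetical rule: returning visitors seem committed, so charge 15% more.
    if visits >= 3:
        return BASE_PRICE_CENTS * 115 // 100
    return BASE_PRICE_CENTS
```

With the header `query=lamps; visits=4`, `restored_search` gives back the saved search and `quoted_price_cents` quietly raises the price. A visitor without the `visits` cookie sees the base price and has no way to tell that anyone else sees something different.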

Decidability

Okay, so since we execute Javascript client-side, how about we check to make sure the Javascript isn't doing anything illegal or anti-competitive or anti-consumer?

Now this is where the Turing-completeness of Javascript comes into play: because we can encode arbitrary computations in Javascript, there is no general procedure for answering non-trivial questions about what a given piece of Javascript source code will do. This follows from Rice's Theorem (a generalization of the more famous undecidability of the "halting problem"). The upshot is that, so long as we allow arbitrarily expressive code to execute, we will be forever engaged in an arms race of detecting and evading unwanted behavior.
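One round of that arms race can be sketched in a few lines. This is an illustration, not a proof of Rice's Theorem, and it uses Python as a stand-in for Javascript: the "forbidden" capability is Python's built-in `open`, standing in for something like reading cookies, and the checker `naive_scan` is a deliberately simple hypothetical.

```python
import builtins

FORBIDDEN = "open"  # stand-in for a capability we want scripts to avoid


def naive_scan(source: str) -> bool:
    """Return True if the source text never mentions the forbidden name."""
    return FORBIDDEN not in source


def resolves_to_forbidden(source: str) -> bool:
    """Run the snippet and check what `handle` actually ended up bound to."""
    namespace = {}
    exec(source, namespace)
    return namespace["handle"] is builtins.open


# An honest script names the capability directly, so the scan catches it.
honest = "handle = open"

# An evasive script assembles the name at run time, so the text looks clean.
evasive = "import builtins\nhandle = getattr(builtins, 'op' + 'en')"
```

The scan flags `honest` and passes `evasive`, yet running either one leaves `handle` bound to the same function. A smarter scanner could catch this particular trick, at which point the evader picks another one. Rice's Theorem is the reason there is no final scanner that ends the game.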

Another way

Such is the state of the world in Javascript land right now (and specifically what ad blockers and ad blocker blockers are all about).

However, something else has happened in the background: the HTML standard has adapted at a slower pace, gradually adding features that were previously only possible through Javascript and/or CSS. Critically, HTML does this without enabling arbitrary computation. The two Javascript libraries that I use in my posts, Mermaid and MathJax, translate their inputs into HTML elements. Since they aren't dynamic once rendered, there is no usability reason to have them computed client-side.

Collective action

I presented my original justification in a more politically neutral way: removing the reliance on Javascript makes my website legible to people who choose not to run Javascript. However, there's a larger political statement behind it as well.

Even if we trust the authors of Mermaid and MathJax to behave in secure and privacy-respecting ways, the fact that my website required them pushed the burden onto the end-user to decide whether to trust them and me. I added yet another illegible-without-Javascript-enabled website to the world and in doing so contributed to the hegemony of V8 and all it enables. Want to learn React? Well now all the setup tools for new users ship with web vitals. Static site generators ask for your Google Analytics information, making newcomers feel like this is normal.

My goal here is largely a matter of harm reduction. I still host on Microsoft's servers (for now). Still, every little bit counts.

LLMs

I can't finish this post without acknowledging that static sites are far easier and more efficient to scrape than dynamic ones. I stopped blogging in 2022? 2023? in part because I felt dismayed by the prospect of being a content generator for the plagiarism machine. Because I haven't run analytics on my website in a long time, I really have no idea whether anyone actually reads it, so this was more a morale issue than anything else. I ultimately decided that contributing back to the open web was more important than becoming soylent green, but I do want to acknowledge the allure of sticking it to LLMs.

Put another way: it's more important for readers to trust me than it is for me to "trust" that someone won't use my work in ways I don't approve.

Teaser

This post is part one of two, since it's already quite long and I'd wanted to get to discussing why the Mastodon comments aren't static, some thoughts on telemetry, and what I mean by "calcifying trust" (a phrase I wrote down when discussing the move to a mostly static website with Chris Martens).

1. As an example, most browsers now have debugging and developer tools; I suspect the vast majority of users will never access these tools.

2. I mean this of course in terms of minimal requirements for browsing the web. Obviously, as I explain in this post, many websites choose to make it so that some data is only accessible if you have Javascript enabled. Critically, this is a choice in a way that HTML is not a choice.

3. I should note here that text-only browsers also cannot run CSS, the language used for "styling" websites so they have different fonts and different colors and display properly on different devices. There are claims that modern CSS is also Turing complete. As I discuss later in the blog post, Turing completeness is important for the argument against requiring Javascript for two reasons: (1) the Javascript engine can interact with the execution environment, saving state, and (2) Rice's Theorem. Because CSS has more limited access to the host environment's resources and because the transition function relies on user interaction, the threat model is quite different and thus the arguments I make are not necessarily applicable.

4. "But what if my web page is plain text?" you ask, to which I respond, "then ontologically it's plain text that lives in a web-accessible location, not a web page."

5. For an excellent overview of Javascript's rise to dominance, see this 2016 Vice article on the death of Java applets, which I fondly remember from my high school physics class at the turn of the century.

6. Side note: pushing computation client-side has the additional effect of pushing the cost of computation onto the client. You are using your compute power, paying with your electricity, degrading your battery and other hardware to perform computations that would otherwise be borne by the website's owner.